I am a final-year Ph.D. student in Machine Learning at the Georgia Institute of Technology, advised by Prof. Faramarz Fekri.

My research aims to improve long-horizon reasoning and decision-making in large language models. I study post-training and system design for LLM agents, with an emphasis on deliberate reasoning and planning and on scalable agent systems that support adaptive retrieval and long-context interaction.

🚀 Featured Projects

Long-Horizon LLM Agents

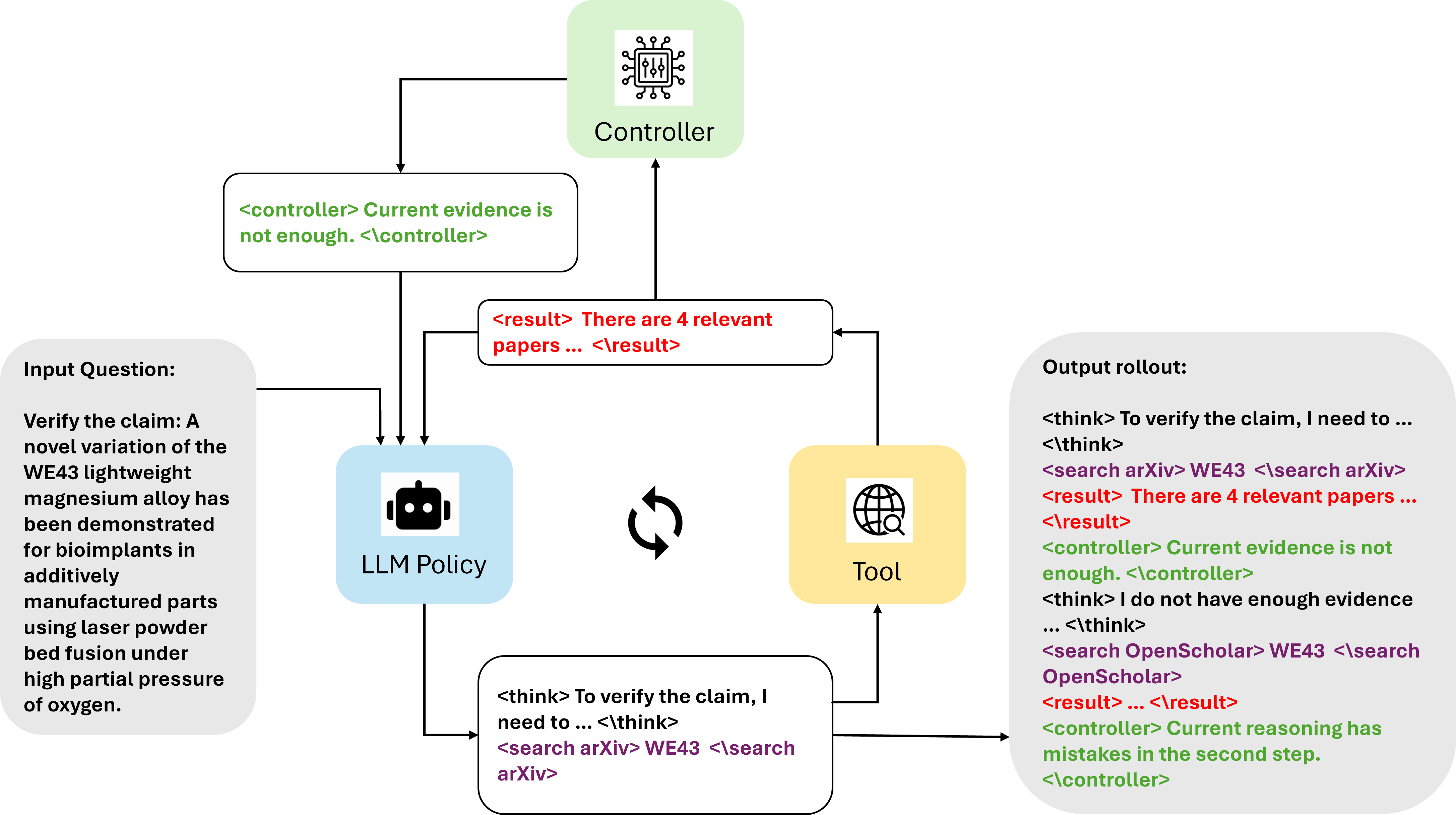

Scaling Search-Augmented Reasoning Agents via Adaptive Information Control

Siheng Xiong, Oguzhan Gungordu, James C. Kerce, Faramarz Fekri

DeepControl is a post-training framework for search-augmented LLM agents that adaptively controls retrieval and expansion based on the agent’s reasoning state, improving long-horizon reasoning performance across diverse benchmarks.

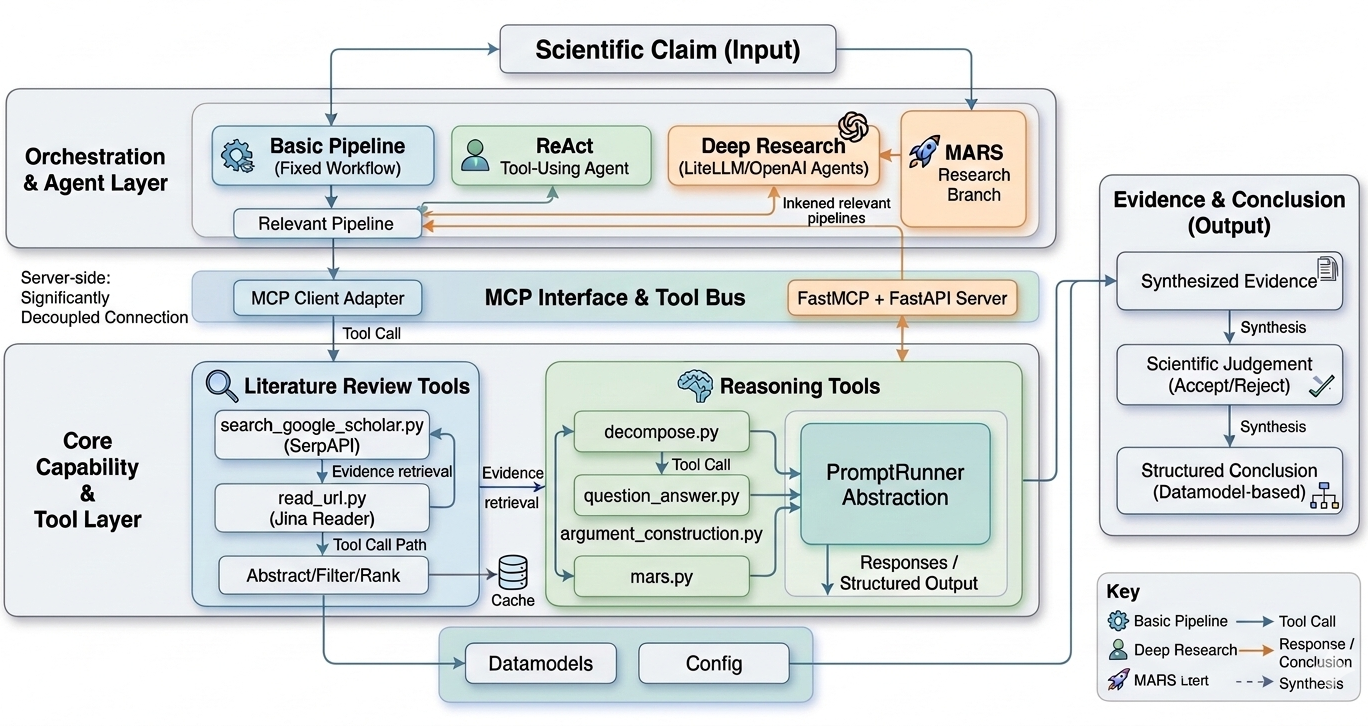

Evidence-Based Expert-Level Scientific Claim Verification

Siheng Xiong, Oguzhan Gungordu, James C. Kerce, Faramarz Fekri

DeepVerify is an agentic framework for expert-level scientific claim verification, combining search, tool use, and structured reasoning to ground decisions in retrieved evidence.

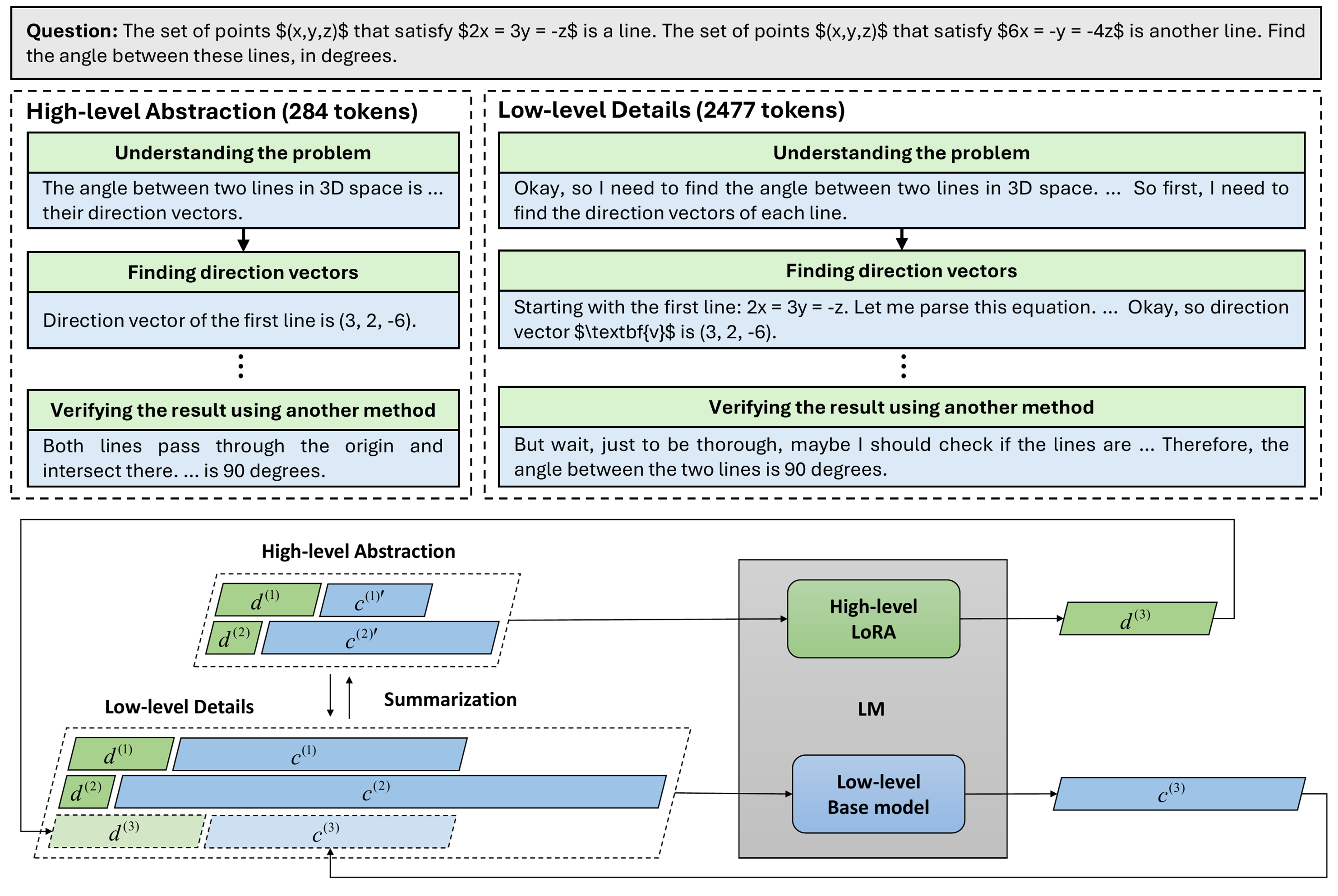

Enhancing Language Model Reasoning with Structured Multi-Level Modeling

Siheng Xiong, Ali Payani, Faramarz Fekri

MLR is a lightweight planner-executor framework for long-horizon reasoning, combining multi-level decomposition with scalable step-level supervision to improve both accuracy and training stability.

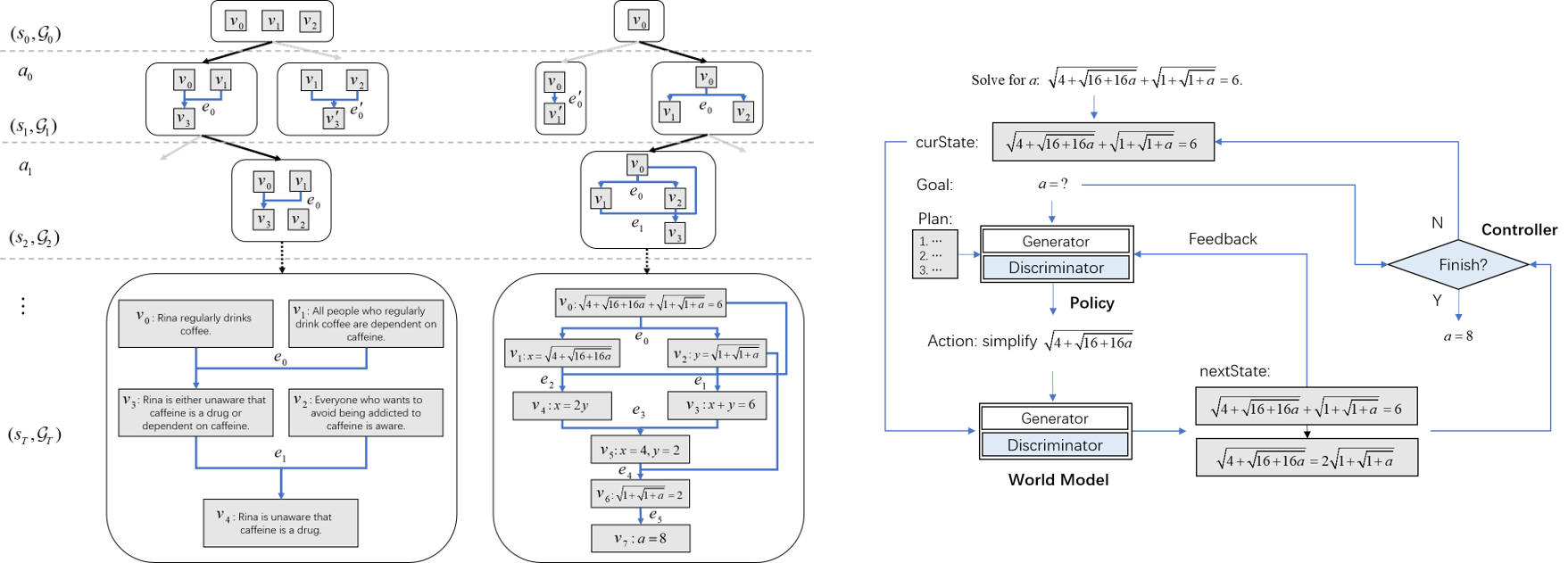

Deliberate Reasoning in Language Models as Structure-Aware Planning with an Accurate World Model

Siheng Xiong, Ali Payani, Yuan Yang, Faramarz Fekri

SWAP frames multi-step reasoning as structure-aware planning, where a world model predicts structured future states to guide action selection.

Long-Context and Memory Systems

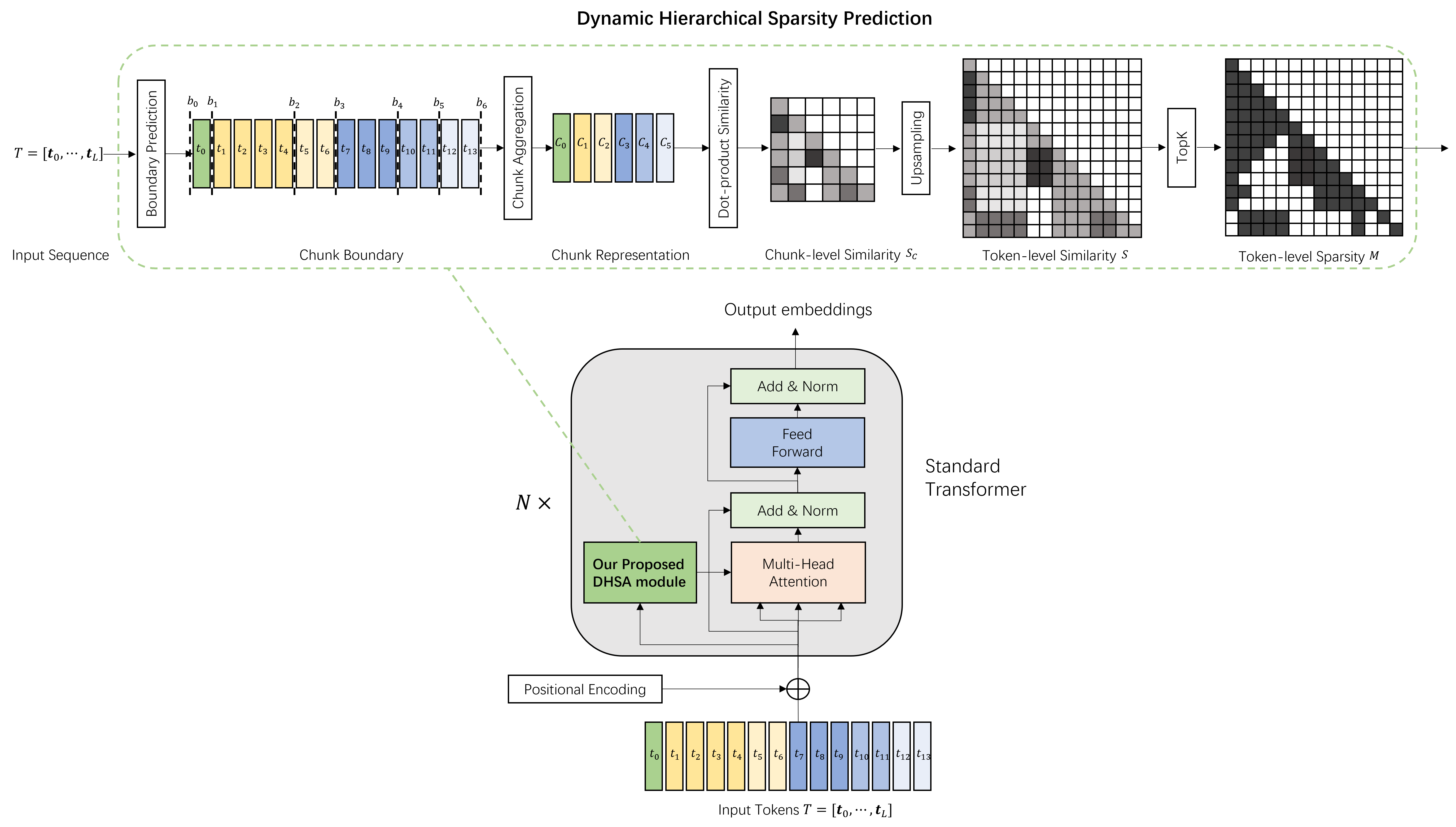

Long-Context Modeling with Dynamic Hierarchical Sparse Attention for Memory-Constrained LLM Inference

Siheng Xiong, Joe Zou, Faramarz Fekri, Yae Jee Cho

DHSA is an efficient sparse attention framework for long-context LLM inference that reduces prefill cost and memory usage while preserving accuracy under tight device constraints.

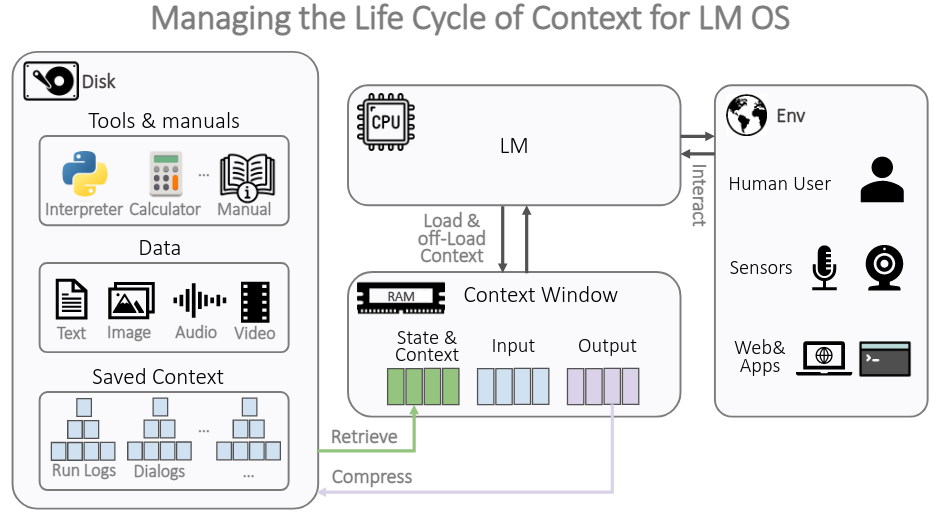

The Compressor-Retriever Architecture for Language Model OS

Yuan Yang, Siheng Xiong, Ehsan Shareghi, Faramarz Fekri

Compressor-Retriever is a model-agnostic architecture for lifelong context management in LLM-based systems, using the base model’s forward pass to compress and retrieve memory while remaining fully differentiable.

📝 Selected Publications

📝 Conference Papers

- ICML 2026 (Spotlight). Long-Context Modeling with Dynamic Hierarchical Sparse Attention for Memory-Constrained LLM Inference. Siheng Xiong, Joe Zou, Faramarz Fekri, Yae Jee Cho. [Repo]

- ICML 2026. Planning through World Model for Automated Heuristic Design via Self-Evolving LLMs. Oguzhan Gungordu, Siheng Xiong, Faramarz Fekri. [Repo]

- ICLR 2026. Enhancing Language Model Reasoning with Structured Multi-Level Modeling. Siheng Xiong, Ali Payani, Faramarz Fekri. [Repo]

- ACS 2025. Deliberate Planning in Language Models with Symbolic Representation. Siheng Xiong, Zhangding Liu, Jieyu Zhou, Yusen Su. [Repo]

- ACL 2025 (main). Deliberate Reasoning in Language Models as Structure-Aware Planning with an Accurate World Model. Siheng Xiong, Ali Payani, Yuan Yang, Faramarz Fekri. [Repo]

- NAACL 2025 (main). CausalEval: Towards Better Causal Reasoning in Language Models. Longxuan Yu*, Delin Chen*, Siheng Xiong*, Qingyang Wu, Dawei Li, Zhikai Chen, Xiaoze Liu, Liangming Pan. [Repo]

- EMNLP 2024. Can LLMs Reason in the Wild with Programs? Yuan Yang, Siheng Xiong, Ali Payani, Ehsan Shareghi, Faramarz Fekri. [Repo]

- IJCAI 2024. Temporal Inductive Logic Reasoning over Hypergraphs. Yuan Yang, Siheng Xiong, Ali Payani, James C Kerce, Faramarz Fekri. [Repo]

- ACL 2024 (main). Large Language Models Can Learn Temporal Reasoning. Siheng Xiong, Ali Payani, Ramana Kompella, Faramarz Fekri. [Repo]

- ACL 2024 (main). Harnessing the power of large language models for natural language to first-order logic translation. Yuan Yang, Siheng Xiong, Ali Payani, Ehsan Shareghi, Faramarz Fekri. [Repo]

- AAAI 2024 (Oral). TEILP: Time prediction over knowledge graphs via logical reasoning. Siheng Xiong, Yuan Yang, Ali Payani, James C Kerce, Faramarz Fekri. [Repo]

- ICLR 2023. TILP: Differentiable learning of temporal logical rules on knowledge graphs. Siheng Xiong, Yuan Yang, Faramarz Fekri, James Clayton Kerce. [Repo]

📝 Workshop Papers

- ICLR 2026 @ SPOT. Scaling Search-Augmented Reasoning Agents via Adaptive Information Control. Siheng Xiong, Oguzhan Gungordu, James C. Kerce, Faramarz Fekri. [Repo]

- NeurIPS 2025 @ ER. Long-Context Modeling with Dynamic Hierarchical Sparse Attention for On-Device LLMs. Siheng Xiong, Joe Zou, Faramarz Fekri, Yae Jee Cho. [Repo]

📝 Preprints

- Preprint 2026. Adaptive Information Control for Search-Augmented LLM Reasoning. Siheng Xiong, Oguzhan Gungordu, James C. Kerce, Faramarz Fekri. [Repo]

- Preprint 2025. Enhancing Long Chain-of-Thought Reasoning with Multi-Path Planning with Aggregation. Siheng Xiong, Ali Payani, Faramarz Fekri. [Repo]

- Preprint 2024. The Compressor-Retriever Architecture for Language Model OS. Yuan Yang, Siheng Xiong, Ehsan Shareghi, Faramarz Fekri. [Repo]

🎖 Honors and Awards

- Georgia Tech CSIP Outstanding Research Award

- China National Scholarship (Top 1% Rank)

- China UHV Scholarship (Top 1% Rank)

- Kaggle Santander Value Prediction Challenge, Silver Medal (Top 3.4% Rank)

📖 Education

- Georgia Institute of Technology, Ph.D. in Machine Learning

- Shanghai Jiao Tong University, M.S. in Electrical and Computer Engineering

- Xi’an Jiaotong University, B.S. in Electrical and Computer Engineering

💻 Experience

- 2025.05 - 2025.08, Applied Research Intern, Google, Sunnyvale, California

- 2023.09 - 2024.04, Research Intern, Cisco Research, San Jose, California

- 2020.05 - 2021.01, Research Student Assistant, Rutgers University (Mentor: Dimitris N. Metaxas), New Brunswick, New Jersey

📄 Services

- Program Committee for AAAI

- Conference Reviewer for NeurIPS, ICML (Gold Reviewer), ICLR, ACL, EMNLP, NAACL, EACL, KDD, AAAI, and ECML

- Journal Reviewer for TMLR and ACM TIST